AI Engineer Interview Prep - QA 2

Question

How do you handle the large memory requirements of KV cache in LLM inference?

Answer

Handling large KV Cache memory requirements in LLM inference is crucial for high-throughput serving and long context windows, as the cache size grows linearly with sequence length and batch size.

We can handle these memory requirements using techniques such as PagedAttention, Grouped-Query Attention (GQA), Multi-Query Attention (MQA), KV cache quantization, and cache offloading.

PagedAttention manages the cache in fixed-size blocks, much like an operating system’s virtual memory, which reduces memory fragmentation and enables larger batch sizes.

Grouped-Query Attention (GQA) or Multi-Query Attention (MQA) decreases the size of the KV cache by reducing the number of Key/Value heads.

Finally, strategies such as quantizing the KV cache (e.g., to INT8) or offloading inactive cache data to cheaper storage (like CPU RAM) also help manage the memory footprint effectively.

Detailed Explanation

When a Large Language Model (LLM) generates text, it internally stores Key (K) and Value (V) vectors for every token it has processed.

This stored information is known as the KV Cache. The KV Cache allows the model to attend to previously generated tokens efficiently, but it also introduces a serious scalability challenge.

As prompts become longer, the number of tokens increases, and the KV Cache grows accordingly.
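
To make the growth concrete, here is a rough back-of-the-envelope calculation. The formula multiplies 2 (for K and V) by the number of layers, KV heads, head dimension, sequence length, batch size, and bytes per value. The configuration below (32 layers, 32 KV heads, head dimension 128, FP16) is an assumed 7B-class setup chosen for illustration, not a measurement of any specific model.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim,
                   seq_len, batch_size, bytes_per_value=2):
    """Approximate KV cache size in bytes.

    The leading factor 2 accounts for storing both K and V.
    bytes_per_value=2 assumes FP16/BF16 storage.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Assumed, illustrative configuration (not taken from a specific model card).
per_token = kv_cache_bytes(32, 32, 128, seq_len=1, batch_size=1)
full = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=8)

print(per_token / 2**20, "MiB per token")
print(full / 2**30, "GiB for 8 sequences of 4096 tokens")
```

Under these assumptions each token costs about half a MiB, so a modest batch of long prompts can consume more memory than the model weights themselves.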

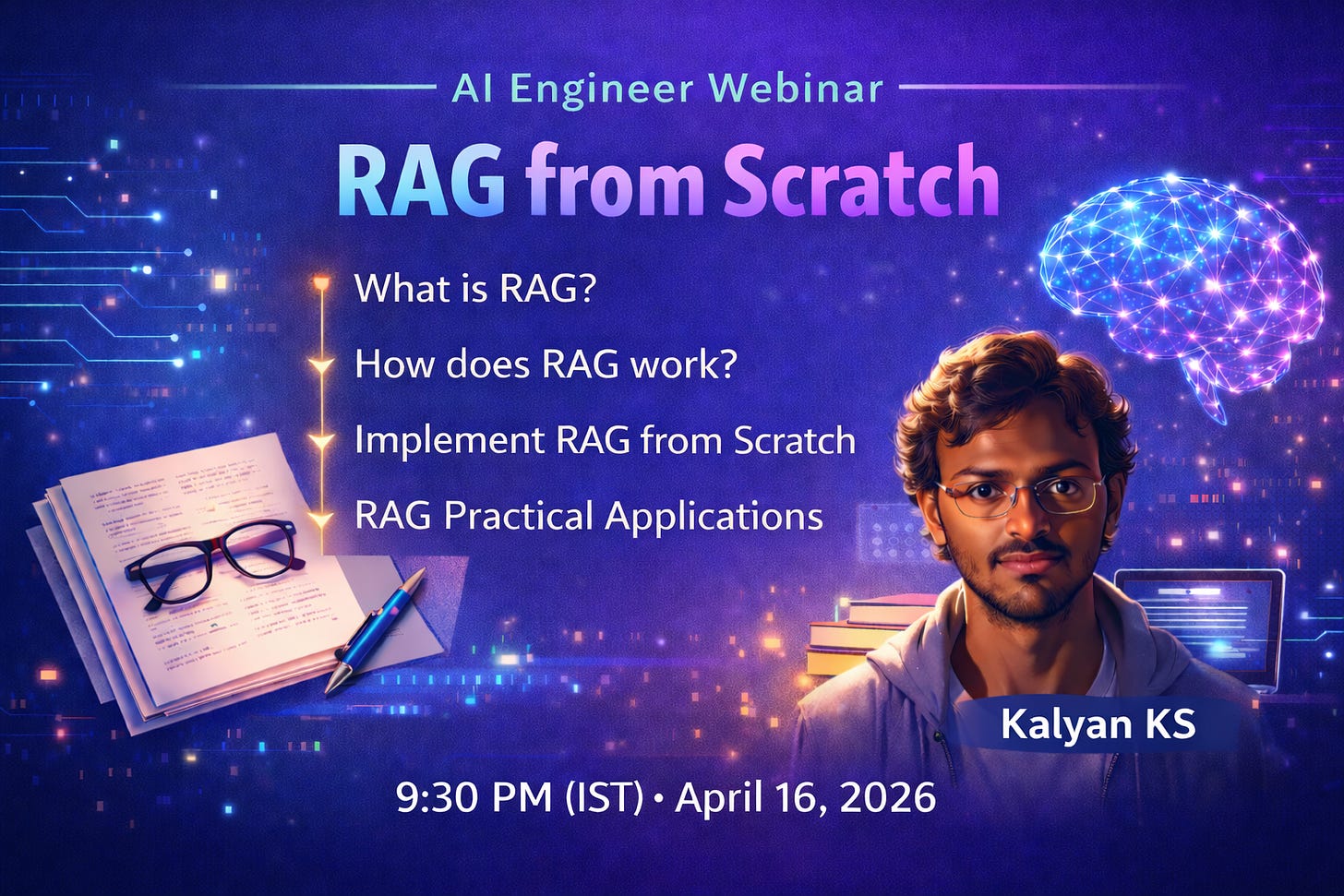

PagedAttention — Virtual Memory for the KV Cache

PagedAttention treats GPU memory much like an operating system treats RAM. Instead of allocating one large, contiguous block of memory for the KV Cache, it divides the cache into fixed-size blocks, or pages.

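These blocks do not need to be contiguous in GPU memory. A per-sequence block table maps each logical position to a physical block, and new blocks are allocated on demand as the sequence grows. Blocks can also be swapped out of GPU memory when it runs short.

This approach largely avoids fragmentation, since the only wasted space is the unfilled part of each sequence's last block, and it makes much more efficient use of limited GPU resources.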

PagedAttention enables:

• Support for longer context windows without exhausting GPU memory

• Higher concurrency by sharing memory more efficiently across requests
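
The block-based bookkeeping can be sketched as a toy allocator. This is a minimal illustration of the idea only: the class name `BlockAllocator`, its methods, and the block size of 16 are hypothetical, and a real serving engine also manages the actual tensors, copy-on-write sharing, and preemption.

```python
class BlockAllocator:
    """Toy allocator that hands out fixed-size KV blocks from a shared pool."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))  # physical block ids
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.seq_lens = {}      # seq_id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve room for one more token; allocate a block only when needed."""
        table = self.block_tables.setdefault(seq_id, [])
        length = self.seq_lens.get(seq_id, 0)
        if length == len(table) * self.block_size:  # no block yet, or last one full
            if not self.free_blocks:
                raise MemoryError("no free KV blocks")
            table.append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1

    def free(self, seq_id):
        """Return all blocks of a finished sequence to the pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)


alloc = BlockAllocator(num_blocks=8, block_size=16)
for _ in range(20):
    alloc.append_token("a")  # 20 tokens -> 2 blocks
for _ in range(5):
    alloc.append_token("b")  # 5 tokens -> 1 block

print(alloc.block_tables, "free:", len(alloc.free_blocks))
```

Because a sequence only ever holds the blocks it has actually filled, plus one partially filled block, memory that a contiguous pre-allocation would reserve for the maximum length stays available for other requests.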

GQA and MQA — Reducing the Number of KV Heads

Standard transformer architectures use multiple attention heads. If a model has 32 attention heads, it typically stores 32 separate sets of K and V vectors, which significantly increases memory usage.

Grouped-Query Attention (GQA) and Multi-Query Attention (MQA) reduce this overhead by sharing KV representations across multiple attention heads.

Instead of every head maintaining its own K and V vectors, several heads reuse the same ones.

This change dramatically reduces the size of the KV Cache while preserving most of the model’s attention quality. The attention computation still happens across many heads, but the storage cost is much lower.

In effect:

• Traditional attention stores one KV cache per head

• MQA stores a single KV cache shared by all heads

• GQA sits between the two: query heads are split into groups, and each group shares one KV cache
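
The three variants differ only in how many KV heads are stored and which KV head each query head reads. The sketch below shows that mapping and the resulting per-token cache size. The helper names and the configuration (32 layers, 32 query heads, head dimension 128, FP16, and 8 KV heads for GQA) are assumptions for illustration.

```python
def kv_head_for_query_head(q_head, num_q_heads, num_kv_heads):
    """Index of the shared KV head that a given query head reads from."""
    group_size = num_q_heads // num_kv_heads
    return q_head // group_size


def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Per-token KV cache size; the factor 2 covers both K and V."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


num_q_heads = 32
for name, num_kv_heads in [("MHA", 32), ("GQA", 8), ("MQA", 1)]:
    mapping = [kv_head_for_query_head(q, num_q_heads, num_kv_heads)
               for q in range(num_q_heads)]
    size = kv_bytes_per_token(32, num_kv_heads, 128)
    print(name, "KV heads:", num_kv_heads,
          "bytes/token:", size, "first 8 q->kv:", mapping[:8])
```

With these numbers, GQA with 8 KV heads stores a quarter of what standard multi-head attention stores, and MQA stores one thirty-second.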

KV Cache Quantization — Compressing Stored Values

Another effective technique is to reduce the numerical precision of the KV Cache. Quantization stores K and V vectors in lower-precision formats. Relative to FP32, FP16 halves the cache and INT8 cuts it by about 75%. Since many serving stacks already keep the cache in FP16 or BF16, the practical saving from moving to INT8 is usually closer to 50%. The impact on model accuracy during inference is often minimal.
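
As a rough illustration of how such quantization works, the sketch below applies symmetric INT8 quantization to a single vector with one scale factor. This particular scheme is an assumption chosen for simplicity; production systems typically quantize per channel or per token group and store the codes in packed tensors.

```python
def quantize_int8(values):
    """Symmetric per-vector INT8 quantization: returns (integer codes, scale)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize_int8(codes, scale):
    """Recover approximate floating-point values from the integer codes."""
    return [c * scale for c in codes]


key_vector = [0.12, -1.5, 0.7, 0.0, 2.54]  # made-up example values
codes, scale = quantize_int8(key_vector)
restored = dequantize_int8(codes, scale)
max_error = max(abs(a - b) for a, b in zip(key_vector, restored))

print("codes:", codes)
print("scale:", scale, "max abs error:", max_error)
```

Each value now needs one byte plus a shared scale, and the rounding error per value is bounded by half the scale.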

Cache Offloading — Using Cheaper Memory Tiers

Not all KV Cache data needs to reside on the GPU at all times. With standard attention, every decoding step reads the full cache of the sequence being generated, so the best candidates for offloading are blocks that are not needed right now, such as those of paused, preempted, or idle requests. Cache offloading takes advantage of this by moving inactive KV blocks to cheaper memory tiers.

Typically, actively used KV blocks remain on the GPU, while inactive blocks are transferred to CPU memory or even NVMe storage. When needed, these blocks can be fetched back, trading extra transfer latency for significant GPU memory savings.
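
The two-tier idea can be sketched with plain Python containers standing in for GPU and CPU memory. Everything here is hypothetical: the class `TieredKVCache`, the least-recently-used eviction policy, and the string payloads are illustrative assumptions, and a real system would move tensors across PCIe with its own scheduling policy.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy two-tier store: a small 'GPU' tier backed by a larger 'CPU' tier."""

    def __init__(self, gpu_capacity):
        self.gpu_capacity = gpu_capacity
        self.gpu = OrderedDict()  # block_id -> data, least recently used first
        self.cpu = {}             # offloaded blocks

    def _evict_if_needed(self):
        # Move least recently used blocks to the CPU tier until GPU fits.
        while len(self.gpu) > self.gpu_capacity:
            old_id, old_data = self.gpu.popitem(last=False)
            self.cpu[old_id] = old_data

    def put(self, block_id, data):
        self.gpu[block_id] = data
        self.gpu.move_to_end(block_id)
        self._evict_if_needed()

    def get(self, block_id):
        if block_id in self.gpu:
            data = self.gpu[block_id]
        else:
            data = self.cpu.pop(block_id)  # fetch back; KeyError if unknown
            self.gpu[block_id] = data
        self.gpu.move_to_end(block_id)
        self._evict_if_needed()
        return data


cache = TieredKVCache(gpu_capacity=2)
for block_id in range(3):
    cache.put(block_id, f"kv-block-{block_id}")

print("on GPU:", list(cache.gpu), "offloaded:", list(cache.cpu))
fetched = cache.get(0)  # brings block 0 back and offloads block 1
print("on GPU:", list(cache.gpu), "offloaded:", list(cache.cpu))
```

The fetch in `get` is where the latency cost appears in practice, which is why offloading pays off mainly for blocks that will not be touched for a while.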
